Reliable mobility in open, dynamic, large-scale environments is a baseline requirement for real-world robotics deployment. Achieving this capability in autonomous driving alone took multiple decades, and the resulting mobility intelligence has not become a reusable foundation for broader robotics. Every new robot rebuilds its mobility from scratch.

Robotics lacks a mobility foundation.

The underlying nature of mobility is largely the same across mobile systems: each must understand the surrounding space, identify feasible motion regions, reason about obstacles and constraints, plan safe trajectories, and use broader contextual information to guide local decisions. At this level, mobility has the potential to become a shared capability for all robots.

We are building MobilityX.

MobilityX is a body-agnostic mobility foundation model. We take mobility data already accumulated in autonomous driving and adjacent domains and translate it into a production-grade foundation that lets newly designed robots acquire mobility intelligence at substantially lower cost.

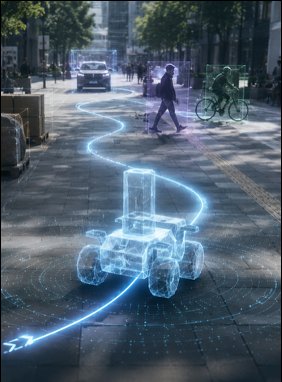

MobilityX 1.0, in action.

Demonstration of three mobility transitions: Mcity World Model, World Labs traversal, and dynamic obstacle avoidance.